NVIDIA OpenShell: The Missing Security Layer for Autonomous AI Agents

AI coding agents now write and execute code autonomously. NVIDIA's OpenShell is a Rust-built, policy-enforced sandbox runtime that finally puts a security boundary between your agent and your production infrastructure.

Let me tell you something uncomfortable: if you're running Claude Code, Codex, or any autonomous coding agent on your workstation right now, that agent can reach everything you can. Your ~/.ssh directory. Your ANTHROPIC_API_KEY. Your kubectl context pointing at production. Your AWS credentials file. All of it.

We've gotten comfortable giving agents bash access because it makes them dramatically more useful. But we've been hand-waving the security surface. NVIDIA just shipped an open-source project that stops hand-waving and draws an actual line: OpenShell.

It's alpha, it's rough in places, and it's one of the most architecturally interesting AI infrastructure projects I've seen this year.

What the Problem Actually Is

The threat model for an AI agent isn't theoretical. When an agent executes curl https://api.github.com to check an issue, nothing stops it from also running cat ~/.aws/credentials and exfiltrating the output. When it writes a deployment script, it can read every config file in your repo — including secrets you forgot you committed six months ago.

The naive fix is to not give agents shell access. But that makes them nearly useless for real infrastructure work. You need agents that can actually do things — run tests, apply Terraform, inspect cluster state, commit code — while being structurally prevented from doing things they shouldn't.

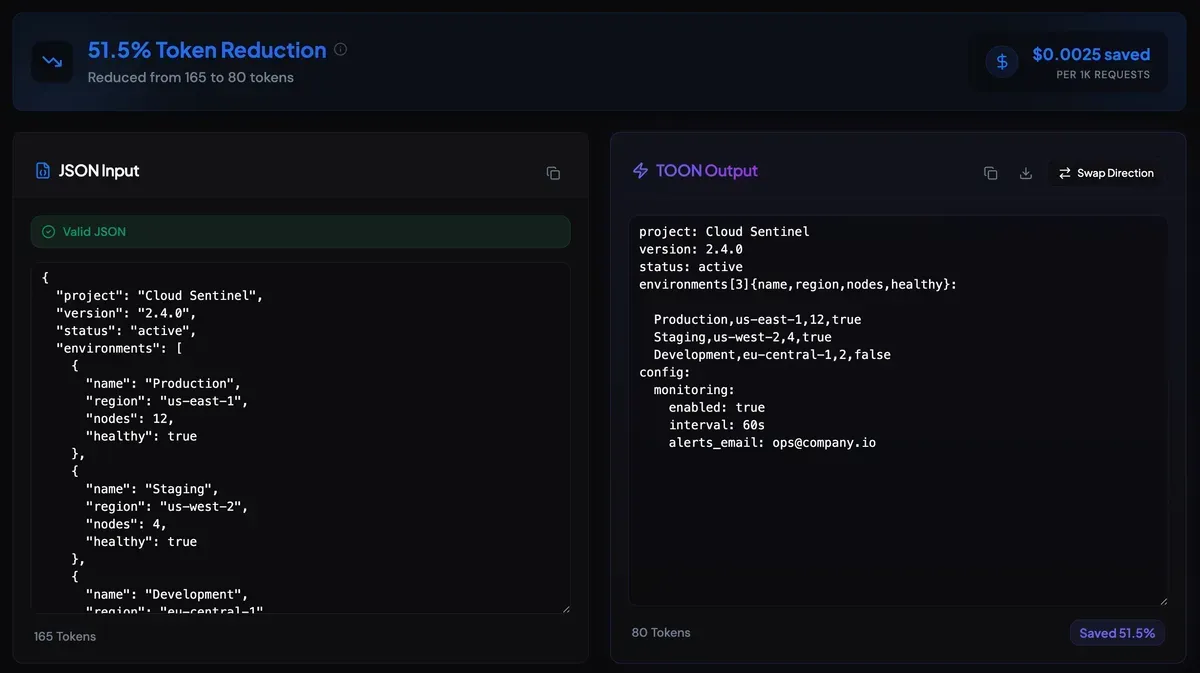

OpenShell's answer: a sandboxed runtime with a policy engine that operates at the filesystem, network, process, and inference layers simultaneously.

Under the Hood

Here's what surprised me when I read the architecture docs: OpenShell doesn't spin up a VM or a cloud sandbox. It runs a full K3s Kubernetes cluster inside a single Docker container on your machine.

That sounds over-engineered until you realise what it buys you. K3s gives OpenShell production-grade container lifecycle management, network policy enforcement via the CNI layer, and a control plane API — without requiring you to have a separate K8s cluster running. The openshell gateway commands provision and manage all of this transparently.

The system has four components:

| Component | Role |
|---|---|
| Gateway | Control-plane API. Coordinates sandbox lifecycle, acts as the auth boundary |
| Sandbox | Isolated runtime. Container with supervised processes and policy-enforced egress |
| Policy Engine | Enforces filesystem, network, and process constraints from L7 down to kernel |
| Privacy Router | Strips caller credentials, injects backend credentials, routes LLM calls |

The Privacy Router is the piece I find most interesting. When your agent calls https://api.anthropic.com, the Privacy Router intercepts the request, strips whatever credentials the caller attached, injects the backend credential, and forwards it. The agent never sees the real key. It cannot exfiltrate it because it was never there to begin with.
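To make the strip-and-inject step concrete, here is a minimal Python sketch of the idea. It is a toy model, not OpenShell's code: the function name, the set of credential headers, and the key values are all my own assumptions for illustration.

```python
# Illustrative model only. rewrite_headers and CREDENTIAL_HEADERS are
# hypothetical names, not part of OpenShell.
CREDENTIAL_HEADERS = {"authorization", "x-api-key"}


def rewrite_headers(incoming: dict[str, str], backend_key: str) -> dict[str, str]:
    """Drop caller-supplied credentials, then attach the backend credential."""
    cleaned = {
        name: value
        for name, value in incoming.items()
        if name.lower() not in CREDENTIAL_HEADERS
    }
    # The real key is added outside the sandbox, after the agent's request left it.
    cleaned["x-api-key"] = backend_key
    return cleaned


agent_request = {
    "X-Api-Key": "placeholder-from-sandbox",
    "content-type": "application/json",
}
forwarded = rewrite_headers(agent_request, backend_key="real-key-held-by-router")
print(forwarded)
```

The point of the design is visible even in the toy version: the real key only exists on the far side of the rewrite, so nothing the agent can read inside the sandbox contains it.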

Four Protection Layers

OpenShell applies defense in depth across four domains. Two are locked at sandbox creation. Two can be hot-reloaded at runtime without restarting anything.
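One way to picture the locked versus hot-reloadable split is a policy object that accepts runtime updates for some domains and refuses them for others. This is a sketch under my own assumptions; the class and exception names are hypothetical and say nothing about how OpenShell structures this internally.

```python
from dataclasses import dataclass, field

# Domain names follow the four layers described in this article.
LOCKED_DOMAINS = frozenset({"filesystem", "process"})
RELOADABLE_DOMAINS = frozenset({"network", "inference"})


class LockedDomainError(Exception):
    """Raised when a runtime update targets a domain fixed at creation."""


@dataclass
class SandboxPolicy:
    # Hypothetical container: maps a domain name to its current rules.
    sections: dict = field(default_factory=dict)

    def reload(self, domain: str, new_rules: list) -> None:
        """Swap rules for a reloadable domain; reject locked or unknown ones."""
        if domain in LOCKED_DOMAINS:
            raise LockedDomainError(f"{domain} is locked at sandbox creation")
        if domain not in RELOADABLE_DOMAINS:
            raise KeyError(f"unknown policy domain: {domain}")
        self.sections[domain] = new_rules


policy = SandboxPolicy(sections={"filesystem": ["/sandbox"], "network": []})
policy.reload("network", [{"host": "api.github.com", "methods": ["GET"]}])
print(policy.sections["network"])
```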

Filesystem (Locked at Creation)

The agent can only read and write paths you explicitly allow. If it tries to access ~/.ssh, ~/.aws, or anything outside the declared scope, the kernel blocks it. This is enforced at the syscall level, not just the shell level — a process can't escape it.
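The allow/deny decision itself is simple to model. The sketch below shows only that decision logic in Python, with hypothetical paths and a function name of my own. The enforcement described above happens in the kernel, which this toy does not replicate, and it ignores symlinks entirely.

```python
import posixpath
from pathlib import PurePosixPath


def is_path_allowed(requested: str, allowed_roots: list[str]) -> bool:
    """Return True if the normalized path sits under one of the allowed roots."""
    # Collapse "." and ".." first so "/sandbox/../home" cannot sneak through.
    normalized = PurePosixPath(posixpath.normpath(requested))
    return any(normalized.is_relative_to(root) for root in allowed_roots)


allowed = ["/sandbox"]  # hypothetical declared scope
for candidate in (
    "/sandbox/project/main.py",
    "/home/user/.ssh/id_rsa",
    "/sandbox/../home/user/.aws/credentials",
):
    print(candidate, is_path_allowed(candidate, allowed))
```

The comparison is component-based rather than a string prefix check, so a sibling directory such as /sandboxed does not count as being inside /sandbox.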

Network (Hot-Reloadable)

This is where OpenShell gets sophisticated. Every outbound connection is intercepted by the policy engine, which enforces rules at the HTTP method and path level. Not just "can this sandbox reach api.github.com" — but "can this sandbox send a POST to /repos/octocat/hello-world/issues."

    # policy.yaml
    network:
      - host: api.github.com
        methods: [GET]
        paths:
          - /repos/*/issues
          - /zen

Apply it to a running sandbox with no restart required:

    openshell policy set my-sandbox --policy policy.yaml --wait

The practical consequence: you can give an agent read access to the GitHub API for context, while structurally blocking it from opening issues, pushing commits, or calling any other endpoint. The policy is enforced at the proxy layer, so the agent can't route around it by changing its HTTP client.
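If it helps to see the matching logic spelled out, here is a rough Python model of a method-and-path check against rules shaped like the policy above. The `Rule` type and `is_allowed` function are hypothetical. I am also assuming `*` behaves like a shell-style glob that can span slashes, which may not match OpenShell's actual pattern semantics.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    host: str
    methods: tuple[str, ...]
    paths: tuple[str, ...]


def is_allowed(rules: list[Rule], method: str, host: str, path: str) -> bool:
    """Default deny: a request passes only if some rule matches host, method, and path."""
    for rule in rules:
        if rule.host != host:
            continue
        if method.upper() not in rule.methods:
            continue
        # Assumption: fnmatch-style globbing, where "*" may also match "/".
        if any(fnmatchcase(path, pattern) for pattern in rule.paths):
            return True
    return False


RULES = [Rule("api.github.com", ("GET",), ("/repos/*/issues", "/zen"))]

print(is_allowed(RULES, "GET", "api.github.com", "/zen"))
print(is_allowed(RULES, "POST", "api.github.com", "/repos/octocat/hello-world/issues"))
```

Because the check runs on the parsed request rather than on the destination IP, swapping curl for another HTTP client changes nothing about the outcome.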

Process (Locked at Creation)

Privilege escalation and dangerous syscalls are blocked. The agent process runs with a minimal capability set. It can't setuid, can't ptrace other processes, can't load kernel modules. Standard seccomp/AppArmor profile enforcement, but managed for you.

Inference (Hot-Reloadable)

Model API calls are rerouted through the Privacy Router. You can switch which model backend the agent uses at runtime, inject rate limits, or redirect to a local model running inside the sandbox with GPU passthrough.
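Switching backends at runtime comes down to a routing target that can be swapped while requests are in flight. A minimal sketch, assuming a hypothetical `InferenceRouter` class and example URLs that are mine rather than OpenShell's:

```python
import threading


class InferenceRouter:
    """Toy router: resolves a request path against a swappable backend URL."""

    def __init__(self, backend_url: str) -> None:
        self._lock = threading.Lock()
        self._backend_url = backend_url

    def set_backend(self, backend_url: str) -> None:
        # Hot reload: later calls to resolve() see the new backend immediately.
        with self._lock:
            self._backend_url = backend_url

    def resolve(self, path: str) -> str:
        with self._lock:
            base = self._backend_url
        return base.rstrip("/") + "/" + path.lstrip("/")


router = InferenceRouter("https://api.anthropic.com")
print(router.resolve("/v1/messages"))

# Redirect to a local model endpoint (hypothetical address) without a restart.
router.set_backend("http://localhost:11434")
print(router.resolve("/v1/messages"))
```

The agent keeps calling the same path. Only the router's idea of where that path lives changes.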

Getting Started

Install is straightforward:

    # Binary install (recommended)
    curl -LsSf https://raw.githubusercontent.com/NVIDIA/OpenShell/main/install.sh | sh

    # Or via PyPI with uv
    uv tool install -U openshell

Spin up a sandbox with Claude Code:

    openshell sandbox create -- claude

The gateway provisions itself on first run. Your ANTHROPIC_API_KEY is auto-discovered from your shell environment and injected as a provider; the key never touches the sandbox filesystem.

The default sandbox includes the tools you actually need: Python 3.13, Node 22, git, gh, vim, networking utilities. The base image is defined in the community catalog and is extensible.

See the network policy in action:

    # Inside the sandbox: everything blocked by default
    sandbox$ curl -sS https://api.github.com/zen
    curl: (56) Received HTTP code 403 from proxy after CONNECT

    # Exit, apply a read-only policy
    sandbox$ exit
    openshell policy set demo --policy examples/sandbox-policy-quickstart/policy.yaml --wait

    # Reconnect: GET allowed, POST denied at L7
    openshell sandbox connect demo
    sandbox$ curl -sS https://api.github.com/zen
    Anything added dilutes everything else.

    sandbox$ curl -sS -X POST https://api.github.com/repos/octocat/hello-world/issues \
      -d '{"title":"oops"}'
    {"error":"policy_denied","detail":"POST /repos/octocat/hello-world/issues not permitted by policy"}

That last response is not a firewall timeout or a connection reset. It's the policy engine returning a structured error at the application layer. The agent sees it, logs it, and moves on. No credentials leaked.
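A structured denial is easy for tooling to act on. As a sketch, here is how agent-side code might tell a policy denial apart from any other response body, assuming the JSON shape shown in the session above. The helper name is hypothetical.

```python
import json
from typing import Optional


def policy_denial_detail(body: str) -> Optional[str]:
    """Return the denial detail if the body is a policy_denied error, else None."""
    try:
        payload = json.loads(body)
    except json.JSONDecodeError:
        return None  # not JSON, so not the structured error shown above
    if isinstance(payload, dict) and payload.get("error") == "policy_denied":
        return payload.get("detail", "")
    return None


denied = (
    '{"error":"policy_denied",'
    '"detail":"POST /repos/octocat/hello-world/issues not permitted by policy"}'
)
print(policy_denial_detail(denied))
print(policy_denial_detail("Anything added dilutes everything else."))
```

An agent that can recognise this case can log the denial and pick another approach instead of retrying a request that will never succeed.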

GPU Support

If you're running local inference — Ollama, a fine-tuned model, anything requiring a GPU — OpenShell can pass host GPUs into the sandbox:

    openshell sandbox create --gpu --from gpu-enabled-sandbox -- claude

The CLI auto-bootstraps a GPU-enabled gateway on first use. You need the NVIDIA Container Toolkit installed on the host, and your sandbox image needs to include the appropriate GPU libraries. The default base image doesn't include them, so you'd build a custom one, but the infrastructure to support it is there.

This matters for the privacy-conscious use case: an agent that uses a local model for most operations (via the Privacy Router) and only calls an external API for specific tasks. You get the latency and cost benefits of local inference with the same policy controls.

The Terminal UI

OpenShell ships a real-time terminal dashboard built in the style of k9s:

    openshell term

Live view of gateways, sandboxes, providers, and active policies. Keyboard-driven navigation. Auto-refreshes every two seconds. Exactly what you want when you're debugging why the policy engine is blocking a request you expected to allow.

Community Sandboxes and BYOC

The --from flag lets you launch from a community catalog entry, a local directory with a Dockerfile, or any container image:

    openshell sandbox create --from openclaw             # community catalog
    openshell sandbox create --from ./my-sandbox-dir     # local Dockerfile
    openshell sandbox create --from registry.io/img:v1   # OCI image

The community catalog currently includes sandboxes for Claude Code, OpenCode, Codex, Ollama, and OpenClaw. Extending it is straightforward: you write a Dockerfile and submit it to the community repo.

The Meta Angle: Built With Agents

The development workflow for OpenShell itself uses agent skills defined in .agents/skills/. Features go through a create-spike → human review → build-from-issue pipeline. Security issues run through review-security-issue → human gating → fix-security-issue. Policy files are generated by a generate-sandbox-policy skill that takes plain-language requirements as input.

It's a credible demonstration that agent-driven development with human approval gates is operational, not aspirational. The fact that they're running this workflow on the very infrastructure they're building is the cleanest possible dogfooding signal.

Honest Assessment

OpenShell is alpha. NVIDIA says so explicitly, and the "single-player mode" label is accurate — it's one developer, one environment, one gateway today. Multi-tenant enterprise deployments are on the roadmap, not in the release.

The architecture is sound. K3s-in-Docker is a legitimately clever choice for local deployment: you get real Kubernetes primitives without requiring a cluster, and the graduation path to a real multi-node deployment is clear. The L7-aware policy engine is the right abstraction — IP-level firewall rules are insufficient for agent workloads. The Privacy Router solving the credential exfiltration problem at the proxy layer is exactly right.

What I'd use it for today: any agent workflow that touches infrastructure, credentials, or external APIs. CI/CD automation pipelines where an agent is generating and applying Terraform. Security review workflows where you want the agent to read code but not commit or push. Any context where you want auditability over what the agent actually called.

What I'd wait on: anything requiring multi-user access, LDAP/SSO integration, or enterprise audit logging. Those aren't there yet.

The trajectory matters here. NVIDIA shipped a working, architecturally coherent security runtime for AI agents in Rust, under Apache 2.0, with GPU support, in about three weeks of public life. That's not a toy project. Keep an eye on this one.

    # Try it now
    curl -LsSf https://raw.githubusercontent.com/NVIDIA/OpenShell/main/install.sh | sh
    openshell sandbox create -- claude

The 2,100+ stars in its first month suggest the industry was waiting for exactly this. Now the question is how fast enterprise features land.