Slash LLM Costs by 60%: The Ultimate Guide to JSON Compression with TOON

Discover how TOON format reduces JSON token usage by nearly 60%. Learn how to cut API costs, optimize data transmission, and improve LLM performance today.

If you are building applications powered by Large Language Models (LLMs), you know the hidden tax of the AI revolution: Token Costs.

Every time you send a prompt to an API like GPT-4 or Claude, you are paying for every word, punctuation mark, and structural character. And if you are sending standard JSON, especially arrays of similar objects, close to 60% of those tokens can be avoidable overhead.

Standard JSON is verbose. It repeats keys for every single object, bloating your payload and burning your budget.

Today, we are introducing a solution designed specifically for the AI era: TOON (Token-Oriented Object Notation). In this post, we'll show how switching to TOON reduced token usage by 59.3% on a sample payload, which at scale can save thousands of dollars annually without losing any information.

The Problem: Why Standard JSON is Expensive for AI

JSON (JavaScript Object Notation) is the standard for web data, but it was designed for readability, not efficiency. When sending data to an LLM, readability is secondary to token count.

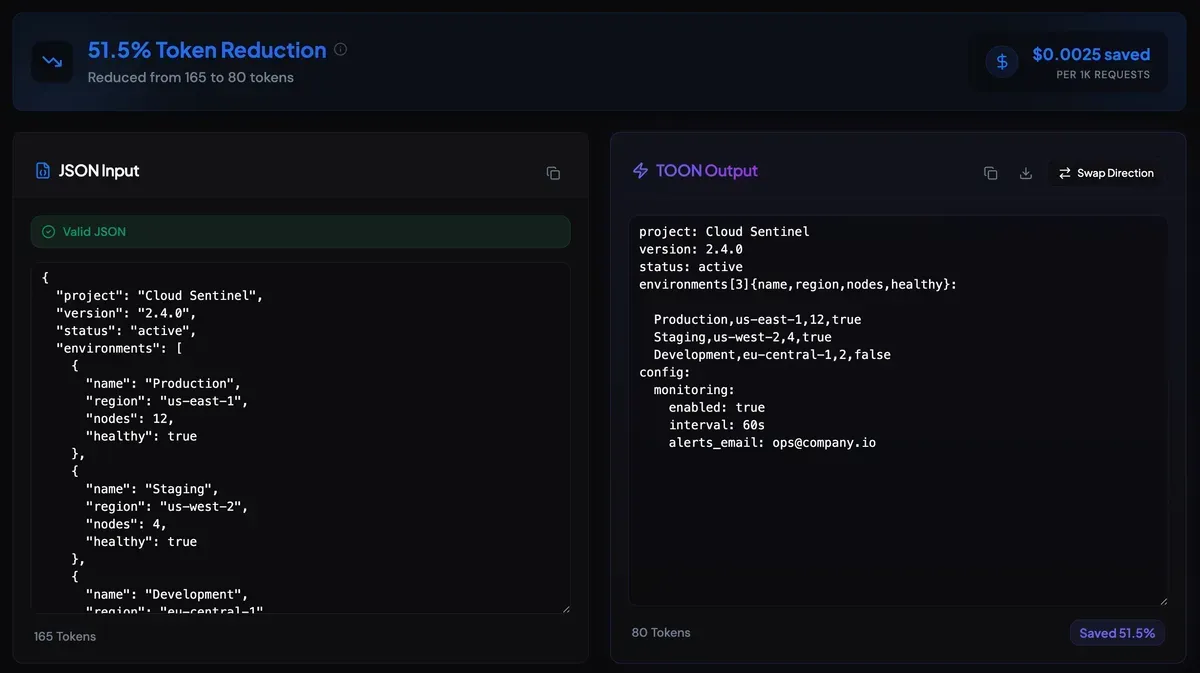

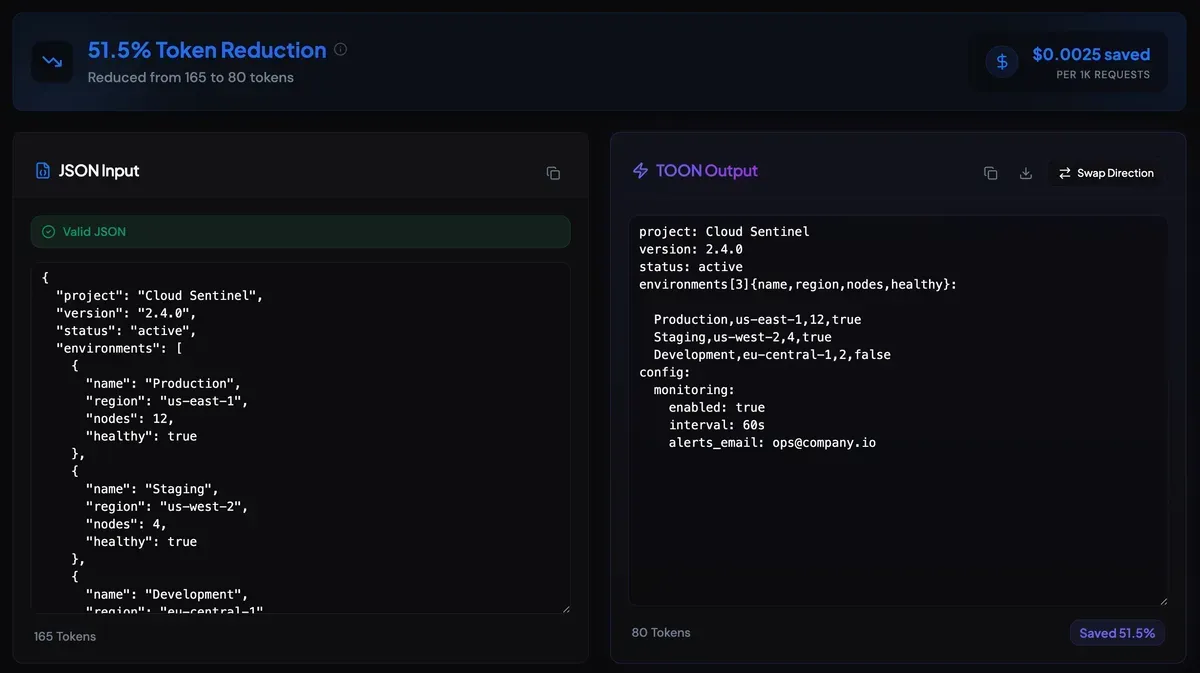

Consider this standard JSON payload describing a project and its deployment environments:

    {
      "project": "Cloud Sentinel",
      "version": "2.4.0",
      "status": "active",
      "environments": [
        { "name": "Production", "region": "us-east-1", "nodes": 12, "healthy": true },
        { "name": "Staging", "region": "us-west-2", "nodes": 4, "healthy": true },
        { "name": "Development", "region": "eu-central-1", "nodes": 2, "healthy": false }
      ],
      "config": {
        "monitoring": {
          "enabled": true,
          "interval": "60s",
          "alerts_email": "ops@company.io"
        }
      }
    }

The Token Cost: This small snippet consumes 108 tokens.

Notice the repetition? The keys "name", "region", "nodes", and "healthy" are repeated for every environment. In a dataset of 1,000 environments, you are sending those key names 4,000 times. That is wasted money.
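To make the repetition concrete, here is a minimal sketch that counts how often each key name appears in the `environments` array of the sample payload and how many characters those key names cost. It measures characters rather than tokens, so treat it as a rough proxy only; the helper name `count_keys` is ours, not part of any library.

```python
import json
from collections import Counter

payload = json.loads("""
{
  "environments": [
    {"name": "Production", "region": "us-east-1", "nodes": 12, "healthy": true},
    {"name": "Staging", "region": "us-west-2", "nodes": 4, "healthy": true},
    {"name": "Development", "region": "eu-central-1", "nodes": 2, "healthy": false}
  ]
}
""")


def count_keys(records):
    """Count how many times each key name appears across a list of objects."""
    counts = Counter()
    for record in records:
        counts.update(record.keys())
    return counts


key_counts = count_keys(payload["environments"])

# Characters spent on key names alone: the name plus two quotes and a colon.
key_overhead = sum((len(key) + 3) * n for key, n in key_counts.items())

print(dict(key_counts))
print(f"characters spent on key names: {key_overhead}")
```

The overhead grows linearly with the number of rows, while a format that declares the keys once pays for them a single time.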

The Solution: Introducing TOON Format

TOON eliminates redundancy by separating the schema (the keys) from the data (the values). It declares the structure once at the top, followed by compact, comma-separated rows.

Here is the exact same data converted to TOON:

    project: Cloud Sentinel
    version: 2.4.0
    status: active
    environments[3]{name,region,nodes,healthy}:
      Production,us-east-1,12,true
      Staging,us-west-2,4,true
      Development,eu-central-1,2,false
    config:
      monitoring:
        enabled: true
        interval: 60s
        alerts_email: ops@company.io

The Token Cost: This payload consumes only 44 tokens.

The Impact by the Numbers

| Metric | Standard JSON | TOON Format | Improvement |
|---|---|---|---|
| Token Count | 108 | 44 | 59.3% Reduction |
| Cost Per Request | $0.0032 | $0.0013 | $0.0019 Saved |
| Annual Cost (1M calls) | $3,200 | $1,300 | $1,900 Saved |

Comparison of JSON vs TOON format showing 59.3% token reduction
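The arithmetic behind these figures can be reproduced in a few lines. The sketch below assumes a price of $0.03 per 1,000 input tokens. That rate is not stated in this post; it is inferred from the per-request costs in the table, so substitute your own provider's pricing.

```python
# Assumed price, inferred from the table above rather than stated in the post.
PRICE_PER_1K_TOKENS = 0.03


def cost(tokens, calls=1, price_per_1k=PRICE_PER_1K_TOKENS):
    """Dollar cost of sending `tokens` input tokens, `calls` times."""
    return tokens / 1000 * price_per_1k * calls


json_tokens, toon_tokens = 108, 44

reduction = (json_tokens - toon_tokens) / json_tokens
json_cost = cost(json_tokens)
toon_cost = cost(toon_tokens)
annual_json = cost(json_tokens, calls=1_000_000)
annual_toon = cost(toon_tokens, calls=1_000_000)

print(f"token reduction: {reduction:.1%}")
print(f"cost per request: ${json_cost:.4f} vs ${toon_cost:.4f}")
print(f"cost per 1M calls: ${annual_json:,.0f} vs ${annual_toon:,.0f}")
```

Under this assumed rate the unrounded totals for one million calls come out slightly higher than the table ($3,240 vs $1,320), which suggests the table rounds the per-request cost before multiplying.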

How TOON Works: A Technical Breakdown

TOON is designed to be human-readable and machine-parsable. It follows a strict syntax that LLMs can understand instantly.

1. The Header Declaration

The first line defines the array name, the count of items, and the keys.

arrayName[count]{key1,key2,key3}:

2. The Data Rows

Subsequent lines contain only the values, separated by commas.

value1,value2,value3
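The header and rows described above can be produced with a short helper. This is a minimal sketch, not a reference implementation: `encode_table` and `format_value` are hypothetical names, it only handles lists of flat objects with identical keys, and its quoting rule (JSON-style quotes around strings that contain commas, quotes, or newlines) is a simplifying assumption.

```python
import json


def format_value(value):
    """Render one scalar for a row. Quoting here is a simplification."""
    if value is True:
        return "true"
    if value is False:
        return "false"
    if value is None:
        return "null"
    if isinstance(value, str):
        # Assumption: quote strings that would be ambiguous inside a row.
        ambiguous = (
            value == ""
            or value != value.strip()
            or value in ("true", "false", "null")
            or any(ch in value for ch in ',"\n')
        )
        return json.dumps(value) if ambiguous else value
    return str(value)


def encode_table(name, rows):
    """Encode a non-empty list of flat dicts with identical keys as a table."""
    if not rows:
        raise ValueError("this sketch expects at least one row")
    keys = list(rows[0])
    if any(list(row) != keys for row in rows):
        raise ValueError("all rows must share the same keys in the same order")
    fields = ",".join(keys)
    lines = [f"{name}[{len(rows)}]{{{fields}}}:"]
    for row in rows:
        lines.append("  " + ",".join(format_value(row[k]) for k in keys))
    return "\n".join(lines)


environments = [
    {"name": "Production", "region": "us-east-1", "nodes": 12, "healthy": True},
    {"name": "Staging", "region": "us-west-2", "nodes": 4, "healthy": True},
    {"name": "Development", "region": "eu-central-1", "nodes": 2, "healthy": False},
]

table = encode_table("environments", environments)
print(table)
```

Rejecting rows with mismatched keys keeps the sketch honest: the tabular form only pays off when every object in the array has the same shape.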

Why LLMs Love TOON

LLMs are trained on vast amounts of code and data, including tabular formats such as CSV, so they recognize row-and-column patterns easily. When you present data in a tabular format like TOON, fewer tokens go to structural characters and repeated keys, leaving more of the context window for the content itself. This may also help reasoning performance, although that is worth verifying on your own prompts.

Real-World Use Cases

Where should you implement TOON in your stack?

1. RAG (Retrieval-Augmented Generation) Context

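When injecting database records into a prompt context, use TOON to fit more relevant data into the context window. At the 59.3% reduction measured above, the same token budget holds roughly 2.4x as many records of similar shape, so you can include more than twice the data for the same price.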

2. Function Calling & Tool Use

When an agent tool returns structured results, serializing them as TOON before handing them back to the model reduces the number of tokens those results consume.

3. API Payload Optimization

If you are building mobile apps or IoT devices with limited bandwidth, TOON reduces payload size, leading to faster load times and lower data usage for your end users.
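For the RAG case, a quick back-of-the-envelope check shows how the saving translates into context capacity. The sketch treats the 108 and 44 token counts from the sample payload as the size of one record, and the 8,000-token budget is a hypothetical figure chosen for illustration.

```python
def records_per_budget(budget_tokens, tokens_per_record):
    """How many whole records fit into a fixed token budget."""
    return budget_tokens // tokens_per_record


budget = 8_000  # hypothetical context budget reserved for retrieved records

json_fit = records_per_budget(budget, 108)
toon_fit = records_per_budget(budget, 44)
ratio = toon_fit / json_fit

print(f"JSON records: {json_fit}, TOON records: {toon_fit}, ratio: {ratio:.2f}x")
```

Real records vary in size and shape, so measure your own data with your provider's tokenizer before relying on a ratio like this.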

Is TOON Reversible?

Yes. One of the core design principles of TOON is lossless compression.

You can convert TOON back to standard JSON instantly. This makes it perfect for:

- Storage: Save data in TOON to save database space.

- Transmission: Send data over the wire in TOON.

- Consumption: Convert back to JSON only when the client application needs it.
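Converting a tabular block back to JSON can be sketched as follows. This is an illustrative decoder under simplifying assumptions, not an official parser: `decode_table` and `parse_scalar` are hypothetical names, and quoted values that contain commas are not handled.

```python
import json
import re

# Matches a header such as: environments[3]{name,region,nodes,healthy}:
HEADER = re.compile(r"^(?P<name>[\w.-]+)\[(?P<count>\d+)\]\{(?P<keys>[^}]*)\}:$")


def parse_scalar(text):
    """Map a raw cell to a JSON-compatible Python value."""
    if text == "true":
        return True
    if text == "false":
        return False
    if text == "null":
        return None
    if re.fullmatch(r"-?\d+", text):
        return int(text)
    if re.fullmatch(r"-?\d+\.\d+", text):
        return float(text)
    return text


def decode_table(block):
    """Decode one tabular block into (array name, list of dicts)."""
    lines = [line.strip() for line in block.splitlines() if line.strip()]
    match = HEADER.match(lines[0])
    if match is None:
        raise ValueError("first line is not a table header")
    keys = match["keys"].split(",")
    rows = []
    for line in lines[1:]:
        cells = line.split(",")  # assumption: no quoted commas inside cells
        if len(cells) != len(keys):
            raise ValueError(f"expected {len(keys)} values, got {len(cells)}")
        rows.append(dict(zip(keys, map(parse_scalar, cells))))
    if len(rows) != int(match["count"]):
        raise ValueError("row count does not match the declared count")
    return match["name"], rows


block = """environments[3]{name,region,nodes,healthy}:
  Production,us-east-1,12,true
  Staging,us-west-2,4,true
  Development,eu-central-1,2,false"""

name, rows = decode_table(block)
print(json.dumps({name: rows}, indent=2))
```

The declared count in the header doubles as a cheap integrity check: if a row was truncated in transit, decoding fails instead of silently returning partial data.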

Try It Yourself: Free TOON Converter

We believe in transparency. We've built a free tool to help you calculate exactly how much you can save.

Simply paste your JSON, and our tool will:

- Validate your syntax.

- Convert it to TOON instantly.

- Calculate your exact token savings and dollar amount.

Conclusion: Optimization is No Longer Optional

In the early days of the web, we optimized images and minified CSS to improve speed. In the age of AI, we must optimize tokens to improve viability.

Switching to TOON is a low-effort, high-impact change. It requires no new infrastructure, no complex libraries, and no data loss. It simply makes your data leaner, faster, and cheaper.

Ready to cut your API bill?

👉 Launch the TOON Converter Tool

Frequently Asked Questions (FAQ)

Q: Does TOON support nested objects?

A: Yes! TOON supports nested objects and arrays. Complex structures are automatically optimized using indented keys and tabular representations for uniform arrays, ensuring maximum token efficiency across your entire data model.
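As an illustration of how nesting and tabular arrays combine, the sketch below renders the sample payload from this post using indented keys for nested objects and a table for the uniform array. It is a simplified, hypothetical encoder (`to_toon` and `scalar` are our names): it does no quoting and supports only scalars, nested objects, and non-empty lists of flat objects with identical keys.

```python
def scalar(value):
    """Render a scalar without quoting (a deliberate simplification)."""
    if value is True:
        return "true"
    if value is False:
        return "false"
    if value is None:
        return "null"
    return str(value)


def to_toon(obj, indent=0):
    """Return the lines for a dict of scalars, nested dicts, and uniform lists."""
    pad = "  " * indent
    lines = []
    for key, item in obj.items():
        if isinstance(item, dict):
            lines.append(f"{pad}{key}:")
            lines.extend(to_toon(item, indent + 1))
        elif isinstance(item, list):
            if not item or not all(isinstance(row, dict) for row in item):
                raise ValueError("only non-empty lists of objects are supported")
            fields = list(item[0])
            if any(list(row) != fields for row in item):
                raise ValueError("rows must share the same keys")
            joined = ",".join(fields)
            lines.append(f"{pad}{key}[{len(item)}]{{{joined}}}:")
            for row in item:
                lines.append(f"{pad}  " + ",".join(scalar(row[f]) for f in fields))
        else:
            lines.append(f"{pad}{key}: {scalar(item)}")
    return lines


payload = {
    "project": "Cloud Sentinel",
    "version": "2.4.0",
    "status": "active",
    "environments": [
        {"name": "Production", "region": "us-east-1", "nodes": 12, "healthy": True},
        {"name": "Staging", "region": "us-west-2", "nodes": 4, "healthy": True},
        {"name": "Development", "region": "eu-central-1", "nodes": 2, "healthy": False},
    ],
    "config": {
        "monitoring": {"enabled": True, "interval": "60s", "alerts_email": "ops@company.io"}
    },
}

toon_text = "\n".join(to_toon(payload))
print(toon_text)
```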

Q: Is TOON secure?

A: Yes. TOON is a text-based format just like JSON. It does not execute code and carries the same security profile as standard text data.

Q: Can I use TOON with Python or JavaScript?

A: Absolutely. Because TOON is text-based, you can parse it in any language using standard string manipulation or regex. We are working on native libraries for Python and Node.js.
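As a taste of what plain string handling looks like, here is a minimal sketch that reads the indented `key: value` portion of a TOON document into nested dictionaries. `parse_nested` is a hypothetical helper; it assumes space indentation, leaves every value as a string, and does not handle tabular arrays.

```python
def parse_nested(text):
    """Parse indented `key: value` lines into nested dicts (values stay strings)."""
    root = {}
    stack = [(-1, root)]  # pairs of (indent width, container at that level)
    for raw in text.splitlines():
        if not raw.strip():
            continue
        indent = len(raw) - len(raw.lstrip(" "))
        key, _, rest = raw.strip().partition(":")
        # Climb back up until the top of the stack is this line's parent.
        while stack[-1][0] >= indent:
            stack.pop()
        parent = stack[-1][1]
        rest = rest.strip()
        if rest == "":
            child = {}
            parent[key] = child
            stack.append((indent, child))
        else:
            parent[key] = rest
    return root


document = """project: Cloud Sentinel
config:
  monitoring:
    enabled: true
    interval: 60s
    alerts_email: ops@company.io"""

parsed = parse_nested(document)
print(parsed)
```

A production parser would also need type conversion, quoting, and table support, which is where a dedicated library becomes worthwhile.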