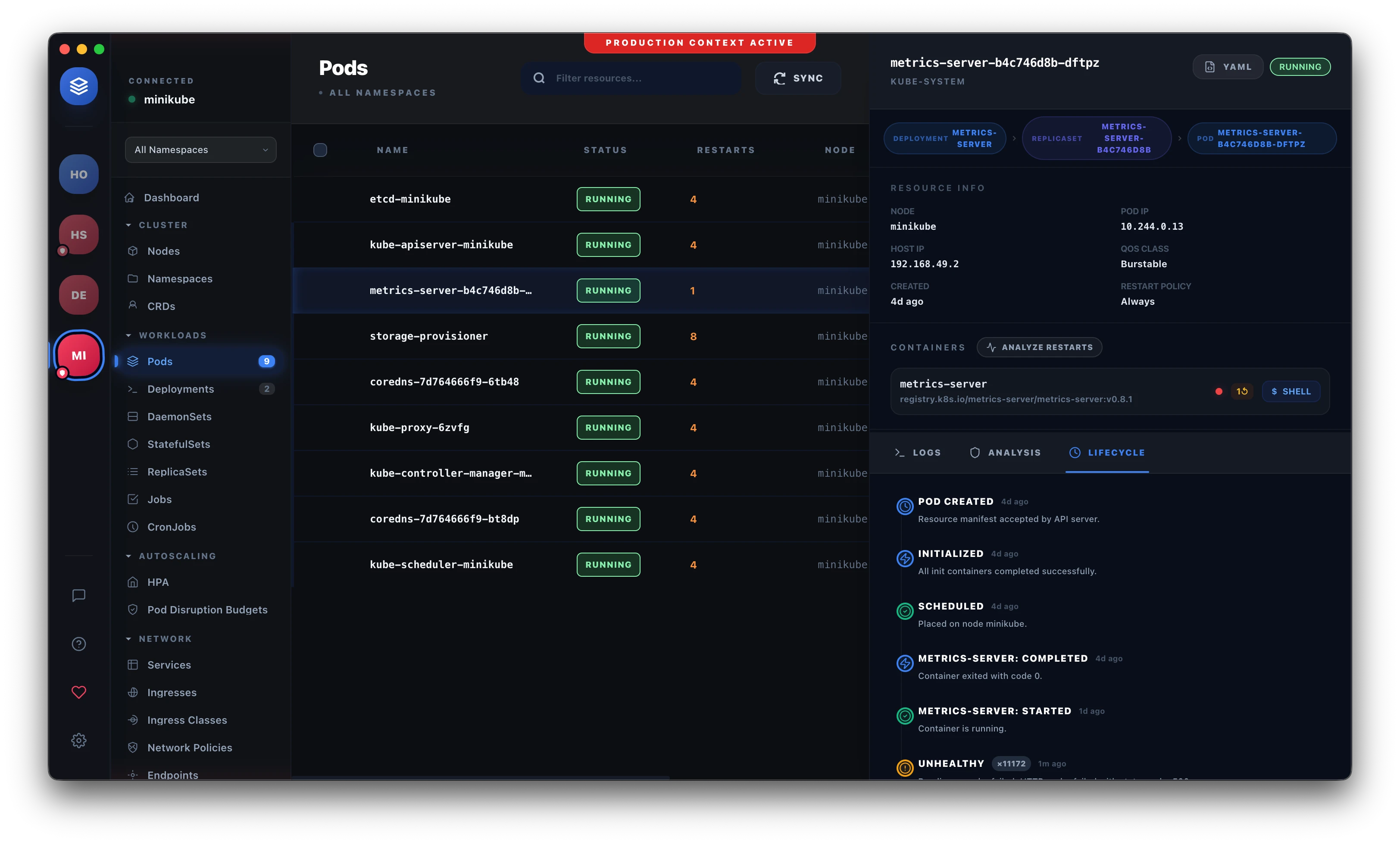

How We Built a Real-Time Kubernetes UI Using Informers

Polling the Kubernetes API every few seconds is a trap. Here's how we used informers to build a UI that reflects cluster state instantly — and what broke along the way.

When we started building Podscape, the first version polled the Kubernetes API every three seconds. It worked. It was also wrong — stale state between polls, unnecessary load on the API server, and the UI felt sluggish compared to what engineers expected from a native tool.

The fix was informers. This is what we learned building a production Kubernetes UI on top of them.

The Problem With Polling

Every kubectl get pods --watch you've ever run is doing something smarter than your typical dashboard. It's not refreshing. It's watching.

Polling has three real problems for a UI:

Staleness. A pod crashes at t=0. Your poll fires at t=2.9s. The user is looking at stale state for nearly three seconds. In an incident, that's three seconds of confusion.

API server load. Multiply your poll interval by your user count and your resource types. A small dashboard hitting pods, deployments, services, events, and nodes every three seconds across ten clusters is making thousands of requests per minute to API servers that have real work to do.

Noise. Polling gives you the current state, not what changed. You either diff everything client-side or you miss transitions entirely — a pod that crashed and restarted between two polls looks healthy.

Informers solve all three.

What Informers Actually Are

An informer in client-go is a combination of three things:

- A List call — fetch the current state of all resources on startup

- A Watch stream — keep an HTTP/2 connection open and receive events as resources change

- A local in-memory cache — so your application reads from local state, not the API server

The key insight: after the initial list, your application never hits the API server again for reads. It reads from a local cache that's kept current by the watch stream. This is how kubectl get with --watch is so fast — and it's how we built Podscape.

go1import (

2 "k8s.io/client-go/informers"

3 "k8s.io/client-go/tools/cache"

4)

5

6factory := informers.NewSharedInformerFactory(clientset, 0)

7podInformer := factory.Core().V1().Pods()

8

9podInformer.Informer().AddEventHandler(cache.ResourceEventHandlerFuncs{

10 AddFunc: func(obj interface{}) {

11 pod := obj.(*v1.Pod)

12 broadcastPodEvent("ADDED", pod)

13 },

14 UpdateFunc: func(oldObj, newObj interface{}) {

15 pod := newObj.(*v1.Pod)

16 broadcastPodEvent("MODIFIED", pod)

17 },

18 DeleteFunc: func(obj interface{}) {

19 pod := obj.(*v1.Pod)

20 broadcastPodEvent("DELETED", pod)

21 },

22})

23

24stopCh := make(chan struct{})

25factory.Start(stopCh)

26factory.WaitForCacheSync(stopCh)

factory.WaitForCacheSync is the part everyone forgets. Without it, your handlers can fire before the initial list is complete and you'll serve partial state.

SharedInformer vs. Individual Informers

The Shared in SharedInformerFactory matters. If you naively create one informer per controller per resource type, each one opens its own watch connection. For a UI watching pods, deployments, replicasets, services, events, nodes, ingresses, and configmaps — that's eight watch streams per cluster, per user.

SharedInformerFactory ensures a single watch stream per resource type regardless of how many parts of your application are listening. Multiple event handlers share one stream.

go// Both of these share a single watch connection for pods podInformer1 := factory.Core().V1().Pods().Informer() podInformer2 := factory.Core().V1().Pods().Informer() // same underlying informer

In Podscape, we register multiple handlers on the same informer — one to update the in-memory cache, one to push events to connected frontend clients via IPC.

Bridging to the Frontend

Informers run in your backend process. Getting events to the UI is a separate problem. In Podscape (an Electron app), we use Electron's IPC to push events from the Go backend to the renderer process:

go1// Go backend sends events over a WebSocket to the Electron main process

2func broadcastPodEvent(eventType string, pod *v1.Pod) {

3 event := PodEvent{

4 Type: eventType,

5 Name: pod.Name,

6 Namespace: pod.Namespace,

7 Phase: string(pod.Status.Phase),

8 Ready: isPodReady(pod),

9 Restarts: getRestartCount(pod),

10 Node: pod.Spec.NodeName,

11 Age: pod.CreationTimestamp.Time,

12 }

13 eventBus.Publish("pod.changed", event)

14}

The frontend subscribes to pod.changed and updates React state. The result is a UI that reflects reality within milliseconds of a change happening in the cluster — without a single poll.

The Resync Interval

The 0 in NewSharedInformerFactory(clientset, 0) is the resync period. Setting it to zero disables periodic resync. A non-zero value (e.g., 30 * time.Second) triggers UpdateFunc for every object in the cache at that interval, even if nothing changed.

We initially set it to 30 seconds thinking it was a safety net. It generated thousands of spurious update events that hammered our event bus and caused the UI to re-render constantly. Set it to zero unless you have a specific reason not to.

go// Resync every 10 minutes as a safety net — not every 30 seconds factory := informers.NewSharedInformerFactory(clientset, 10*time.Minute)

If you need a resync, 10 minutes is a more reasonable floor for a UI.

Namespace Scoping

By default, SharedInformerFactory watches all namespaces. For large clusters this means your informer caches every pod across every namespace. On a cluster with thousands of pods across 50 namespaces, this is a lot of memory and a lot of events.

Scope to the namespace the user is currently viewing:

gofactory := informers.NewSharedInformerFactoryWithOptions( clientset, 10*time.Minute, informers.WithNamespace("production"), )

In Podscape we maintain a factory per (cluster, namespace) pair and tear them down when the user switches context. This keeps memory bounded and avoids processing events the user doesn't care about.

What Goes Wrong in Production

Watch connection drops silently. Kubernetes API servers close watch connections after a timeout (typically around 5 minutes). client-go handles reconnection automatically, but it re-lists first. If your list takes 10 seconds on a large cluster, your UI goes stale during that window. We surfaced a "reconnecting..." indicator in Podscape rather than silently serving potentially stale state.

ResourceVersion tracking breaks on API server restart. The watch stream relies on ResourceVersion to resume from where it left off. If the API server restarts and prunes its event history, the client gets a 410 Gone and must restart from a fresh list. client-go handles this, but you'll see a spike in memory allocation as it re-lists. Watch your memory metrics after cluster upgrades.

DeleteFunc receives a tombstone, not the object. When an informer misses a delete event (because it was reconnecting), it delivers a cache.DeletedFinalStateUnknown tombstone instead of the actual object. Type-asserting directly to *v1.Pod panics:

go1// Wrong — panics on tombstone

2DeleteFunc: func(obj interface{}) {

3 pod := obj.(*v1.Pod) // panic: interface conversion fails

4}

5

6// Right — handle tombstone

7DeleteFunc: func(obj interface{}) {

8 pod, ok := obj.(*v1.Pod)

9 if !ok {

10 tombstone, ok := obj.(cache.DeletedFinalStateUnknown)

11 if !ok {

12 return

13 }

14 pod, ok = tombstone.Obj.(*v1.Pod)

15 if !ok {

16 return

17 }

18 }

19 broadcastPodEvent("DELETED", pod)

20}

This is documented in client-go but easy to miss. It will eventually panic in production.

Events resource is noisy. Kubernetes Events are a high-volume resource. A single pod crash can generate dozens of events in seconds. We filtered events to Warning type and deduplicated by reason + involvedObject.name before broadcasting to the frontend. Without this, the UI update queue backs up during incidents — exactly when you need it to be fast.

Multi-cluster connection management. Informers don't know about cluster identity. When you're watching three clusters simultaneously, your event handlers need to tag events with the cluster they came from. We learned this the hard way after shipping a version where pod events from cluster B were updating the pod list for cluster A.

Key Takeaways

- Use

WaitForCacheSyncbefore serving any state — partial cache is worse than no cache - Set resync to zero or a long interval (10+ minutes) — periodic resync is not a free safety net

- Always handle the tombstone case in

DeleteFunc— it will panic in production eventually - Scope informers to the namespace the user is watching — all-namespace watching doesn't scale

- Tag every event with cluster identity before it enters your event bus if you're multi-cluster